
The introduction of this loss GREATLY, GREATLY improves the convergence speed, by enforcing the encoder to preserve the identity of generated character, narrowing down the possible search space.įinally total variation loss is included, but from an empirical point of view, it doesn’t do much visible to improve the quality of generated images. It follows a simple idea: the source and generated character should resemble the same character, thus they must appear close to each other in the embedded space as well.

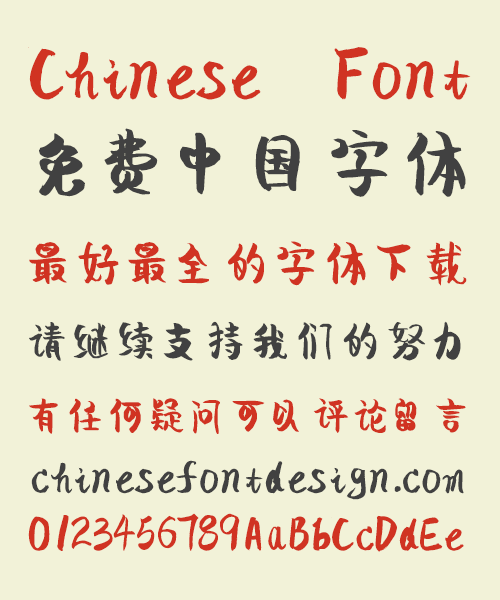

Stolen from the AC-GAN model, the multi-class category loss is added to supervise the discriminator to penalize such scenarios, by predicting the style of the generated characters, thus preserving the style itself.Īnother important piece of the model is the constant loss borrowed from DTN network. However, a new problem emerges: the model starts to confuse and mix the styles together, generating characters that don’t look like any of the provided targets. With category embedding, we now have a GAN that can handle multiple styles at the same time. By doing this, encoder still maps same character into the same vector, the decoder, on the other hand, will take both the character and style embeddings to generate the target character. Inspired by Google’s zero-shot GNMT paper, the introduction of category embedding solves this by concatenating a non-trainable gaussian noise as style embedding to the character embedding, right before it goes through decoder. Vanilla pix2pix model doesn’t handle such one-to-many relationship out-of-box. Now we have a problem that same character could appear in multiple fonts.

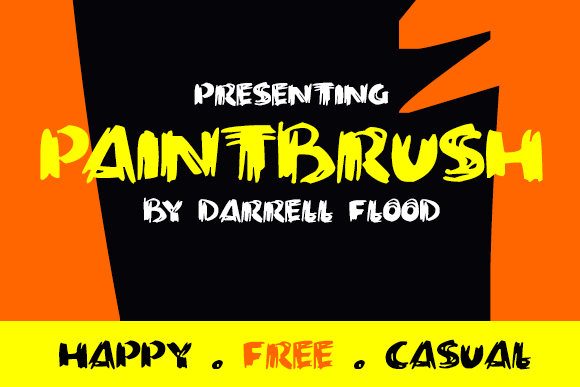

Category Embedding for One-to-Many Modeling The decoder could also learn different ways of writing the same radicals from other fonts.īy training multiple fonts together, it enforces the model to learn from each one of them then use the learned experience to improve the rest of the crowd.The encoder gets exposed to many more characters, not only limited to one target font, but from all the fonts combined.Modeling multiple styles concurrently has two major benefits: Thus it is essential to enable to model to learn multiple font styles at the same time. To reflect this, it is important to make the model not only aware of its own style, but other fonts’ styles as well. Imagine a human designer work on a new typeface, they are definitely not learning the alphabet from scratch! Real world designers go through years of training to understand the structure of the letters/characters and the fundamental principles, before they could design a font on their own. The structure of Encoder, Decoder and Discriminator are directly borrowed from the pix2pix, specifically the Unet model, which are detailed in the original paper’s Appendix section Intuition The network structure is illustrated below. Unsupervised Cross-Domain Image GenerationĪs its name hints, the zi2zi model is directly derived and extended from the popular pix2pix model.Conditional Image Synthesis With Auxiliary Classifier GANs.Image-to-Image Translation with Conditional Adversarial Networks.TL DR: It is a conditional generative adversarial network, combined from 3 awesome papers, with a little additional spice: On a more personal note, it also serves as a playground for me to tap into the wonderland of GAN and its variants. That is how zi2zi comes into being, an attempt to solve the above problems in one blow, this time blessed with the mighty power of GAN. 
Limited to learn and output only one target font style at a time.The generated images are oftentimes blurry.As an experimental attempt it does fulfill its purpose, but some big issues remain: It is a pleasant surprise that the Rewrite project gets a fair amount of attention and interests, however, looking back, the result feels underwhelming. Zi2zi is the follow-up work for my last project, once again tackling the same problem of style transfer between Chinese fonts.

Related code can be found here Motivation
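To make the category embedding and the three extra losses described in this post a bit more concrete, here is a minimal sketch in plain Python. It is not the actual zi2zi code: every function name, the tiny embedding size, the mean-squared form of the constant loss, the softmax cross-entropy form of the category loss and the absolute-difference form of the total variation loss are assumptions picked for illustration.

```python
import math
import random

EMBEDDING_DIM = 4  # hypothetical size, kept tiny for illustration


def make_style_embeddings(num_styles, dim=EMBEDDING_DIM, seed=0):
    """Draw one fixed Gaussian vector per font style; it is never trained."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(num_styles)]


def decoder_input(char_embedding, style_embeddings, style_id):
    """Concatenate the encoder's character embedding with a style embedding."""
    return list(char_embedding) + list(style_embeddings[style_id])


def category_loss(style_logits, true_style):
    """Softmax cross-entropy on the discriminator's style prediction (assumed form)."""
    peak = max(style_logits)
    log_norm = peak + math.log(sum(math.exp(v - peak) for v in style_logits))
    return log_norm - style_logits[true_style]


def constant_loss(source_embedding, generated_embedding):
    """Mean squared distance between the two encoder outputs (assumed form)."""
    squared = [(s - g) ** 2 for s, g in zip(source_embedding, generated_embedding)]
    return sum(squared) / len(squared)


def total_variation_loss(image):
    """Sum of absolute differences between adjacent pixels of a 2D image."""
    height, width = len(image), len(image[0])
    vertical = sum(abs(image[r + 1][c] - image[r][c])
                   for r in range(height - 1) for c in range(width))
    horizontal = sum(abs(image[r][c + 1] - image[r][c])
                     for r in range(height) for c in range(width - 1))
    return vertical + horizontal
```

In this sketch the same character embedding paired with a different `style_id` gives the decoder a different input, which is the one-to-many idea. The constant loss is zero exactly when the encoder maps the source and generated characters to the same vector.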
